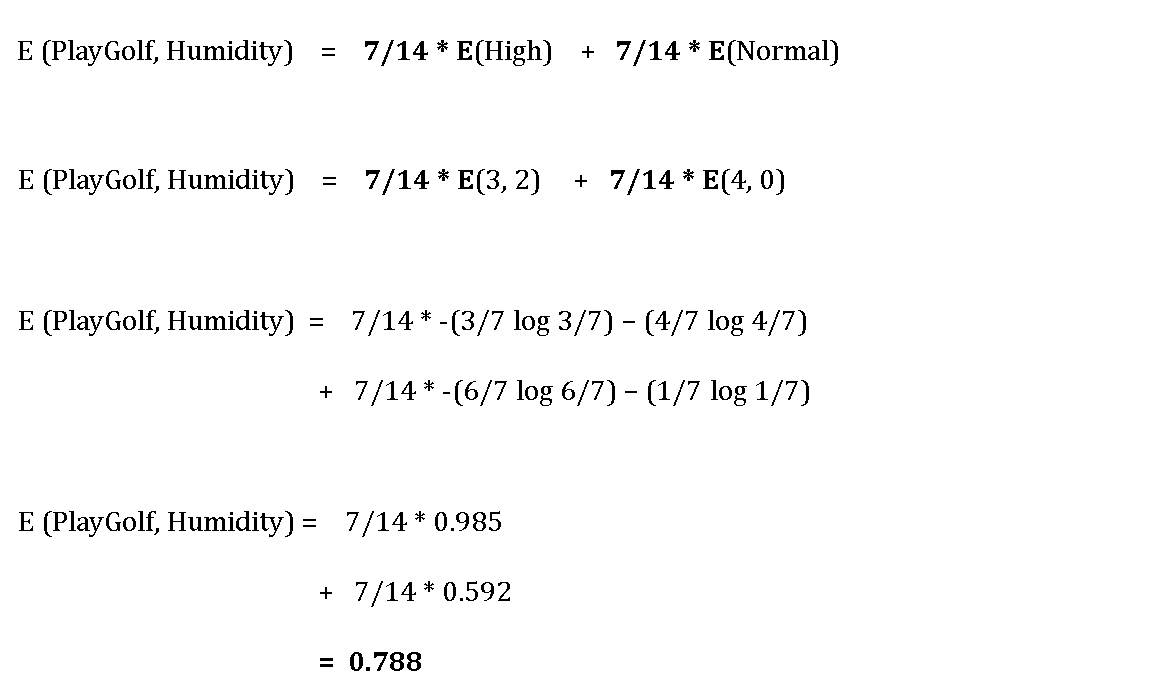

We are constantly working to improve the performance and capabilities of the calculator. The calculator provides a visual representation of the decision tree model and lets you experiment with different settings and input data to see how the model performs. This can be particularly helpful if you are new to decision trees, or if you want to quickly explore different decision tree models and see how they perform on your data. A decision tree can be a good way to represent data like this. By limiting the size of the input data, we can keep the calculator fast, reliable, and easy to use.

The decision tree classifier is a valuable tool for understanding and predicting complex datasets in machine learning and data analysis. A decision tree considers all the possible paths that can lead to a final decision by following a tree-like structure. In the context of decision trees, entropy is a measure of disorder or impurity in a node. This article demonstrates how to find entropy and information gain while drawing a decision tree. I'm going to show you how a decision tree algorithm decides which attribute to split on first: that is, which of two features provides more information about the target variable, or reduces more uncertainty about it, using the concepts of entropy and information gain.
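To make the idea of choosing the first split concrete, here is a minimal sketch in Python. The toy rows and the feature names `outlook`, `windy`, and `play` are invented for illustration and do not come from the calculator. The functions use the standard definitions: entropy is the negative sum of p·log2(p) over the class shares, and information gain is the parent entropy minus the size-weighted entropy of the groups produced by the split.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    counts = Counter(labels)
    return -sum((n / total) * log2(n / total) for n in counts.values())


def information_gain(rows, feature, target):
    """Parent entropy minus the weighted entropy after splitting on `feature`."""
    parent = entropy([row[target] for row in rows])
    # Group the target labels by the value each row has for the feature.
    groups = {}
    for row in rows:
        groups.setdefault(row[feature], []).append(row[target])
    weighted = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return parent - weighted


# Hypothetical toy data: two candidate features and a yes/no target.
rows = [
    {"outlook": "sunny", "windy": "no", "play": "yes"},
    {"outlook": "sunny", "windy": "yes", "play": "yes"},
    {"outlook": "rainy", "windy": "no", "play": "no"},
    {"outlook": "rainy", "windy": "yes", "play": "no"},
]

gains = {f: information_gain(rows, f, "play") for f in ("outlook", "windy")}
best_feature = max(gains, key=gains.get)
print(gains, "-> split on", best_feature)
```

In this toy data, `outlook` separates the classes perfectly (a gain of 1 bit) while `windy` tells us nothing (a gain of 0), so an entropy-based algorithm would split on `outlook` first.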

The decision tree classifier is a free and easy-to-use online calculator and machine learning algorithm that uses classification and prediction techniques to divide a dataset into smaller groups based on their characteristics. The online calculator and graph generator can be used to visualize the results of the decision tree classifier. The result is a visual representation of the decision tree model, which can be downloaded and used to make predictions based on the data you enter. The data you can enter is currently limited to 150 rows and eight columns at most. This is a provisional measure that we have put in place to ensure that the calculator can operate effectively during its development phase. While this limitation may be inconvenient, it also has some benefits.

The decision tree classifier uses impurity measures such as entropy and the Gini index to determine how to split the data at each node in the tree. The depth of the tree, which determines how many times the data can be split, can be set to control the complexity of the model. Information theory provides a measure of the degree of disorganization in a system, known as entropy. Gini impurity and entropy-based information gain usually lead to very similar splits, and people often use the two interchangeably.

To reinforce the concepts, let's look at the decision tree from a slightly different perspective. A decision tree is a graphical representation of all possible solutions to a decision. Decision trees use entropy, through information gain and the ID3 algorithm, to determine the exact series of conditional rules to select. Taken together, these pieces describe the typical decision tree algorithm. Decision trees can also be implemented in Python.
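To see why entropy and the Gini index are often treated as interchangeable, the short sketch below evaluates both for a two-class node as the share `p` of one class varies. The helper names `gini` and `binary_entropy` are illustrative, not part of the calculator. The formulas are the standard ones: Gini impurity is 1 minus the sum of squared class shares, and entropy is the negative sum of p·log2(p).

```python
from math import log2


def gini(p):
    """Gini impurity of a binary node whose positive-class share is p."""
    return 1.0 - (p ** 2 + (1.0 - p) ** 2)


def binary_entropy(p):
    """Entropy (in bits) of a binary node whose positive-class share is p."""
    if p in (0.0, 1.0):
        return 0.0  # a pure node has no uncertainty
    return -(p * log2(p) + (1.0 - p) * log2(1.0 - p))


# Compare the two measures from a pure node (p=0) to an evenly mixed one (p=0.5).
for p in (0.0, 0.1, 0.3, 0.5):
    print(f"p={p:.1f}  gini={gini(p):.3f}  entropy={binary_entropy(p):.3f}")
```

Both measures are zero for a pure node and reach their maximum at an even 50/50 mix (0.5 for Gini, 1 bit for entropy), rising together in between. They are on different scales, but because they move in the same direction they tend to rank candidate splits similarly.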
